Category: SharePoint

Managing concurrent tenant-wide and site collection-scoped SharePoint Framework deployments

I was recently asked a question on the discussion forum for my Pluralsight course “Creating Deployment Packages for SharePoint Framework Projects” about how to manage concurrent tenant-wide and site collection-scoped deployments for SharePoint Framework projects, specifically web parts. As you may know, SharePoint offers site collection app catalogs as a way to decentralize the management of SharePoint Framework solutions (as well as Add-ins built using the Add-in model…is anyone out there still building these?) and scope the deployment of a solution to a single site. This may be useful if you want to leverage separate site collections for staging and testing before deploying a solution to your tenant’s production app catalog. But what if your staging and testing site collections reside within the same production tenant? Will there be duplicate entries for your web part — one for the site collection-scoped deployment and another for the tenant-wide deployment? How does SharePoint manage this?

The site collection-scoped deployment always takes precedence

As long as a version of your solution package’s .sppkg file is uploaded to a site collection’s app catalog, the assets associated with that version will be the only version available to you within that site collection, regardless of whether or not a newer version is deployed tenant-wide. This is true even if you do not currently have the app associated with your solution package installed in that site collection. To demonstrate this, I created a simple web part project based on the Yeoman generator’s HelloWorldWebPart that simply renders TEST. I packaged and deployed this solution to a site collection app catalog, installed it within my TEST site collection using the “Add an app” link, then added my web part to a page:

After that, I created a new version of the solution that updates the web part to render PROD instead. I packaged and deployed this new version tenant-wide from my tenant’s app catalog and added it to a page outside my TEST site collection:

I then went back to my TEST site collection and confirmed that I could still only see one instance of the “ScopeTest” web part in that site:

Adding it to the page again confirmed that this was indeed still my TEST web part:

How can I access the version that is deployed tenant-wide from this site?

One might assume that in order to have access to the tenant deployed PROD version of my web part from my TEST site collection, all I would need to do is remove the installed app instance associated with my TEST web part from that site. However, after I did this, I went to add my “ScopeTest” web part to the page again (expecting the tenant deployed version to be available) and found that it wasn’t there for me to add at all:

It turns out that I had to first delete my TEST web part solution’s .sppkg file from my site collection app catalog. After I did that, I returned to the home page of the site and noticed my TEST web part instances had been replaced with PROD instances automatically:
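The behavior I observed for web parts can be condensed into a small decision function. This is only a sketch of what I saw in my tenant, not a SharePoint API: the function name, the three flags, and the return values are all made up for illustration.

```javascript
// Hypothetical model of the observed behavior; not a SharePoint API.
// Returns which build of the web part a site offers in its web part picker.
function resolveWebPartVersion(site) {
  const { packageInSiteCatalog, appInstalledInSite, deployedTenantWide } = site;

  if (packageInSiteCatalog) {
    // The site collection package shadows the tenant-wide one, even when its
    // app is not installed (in which case nothing is offered at all).
    return appInstalledInSite ? 'site-collection' : 'none';
  }

  // No package in the site collection app catalog: fall back to the tenant.
  return deployedTenantWide ? 'tenant' : 'none';
}

// The surprising case: app removed, .sppkg still in the site collection catalog.
console.log(resolveWebPartVersion({
  packageInSiteCatalog: true,
  appInstalledInSite: false,
  deployedTenantWide: true
}));
```

Only when `packageInSiteCatalog` is false does the tenant-wide deployment become visible, which matches having to delete the .sppkg file rather than just removing the app.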

Application customizer extensions behave a little differently

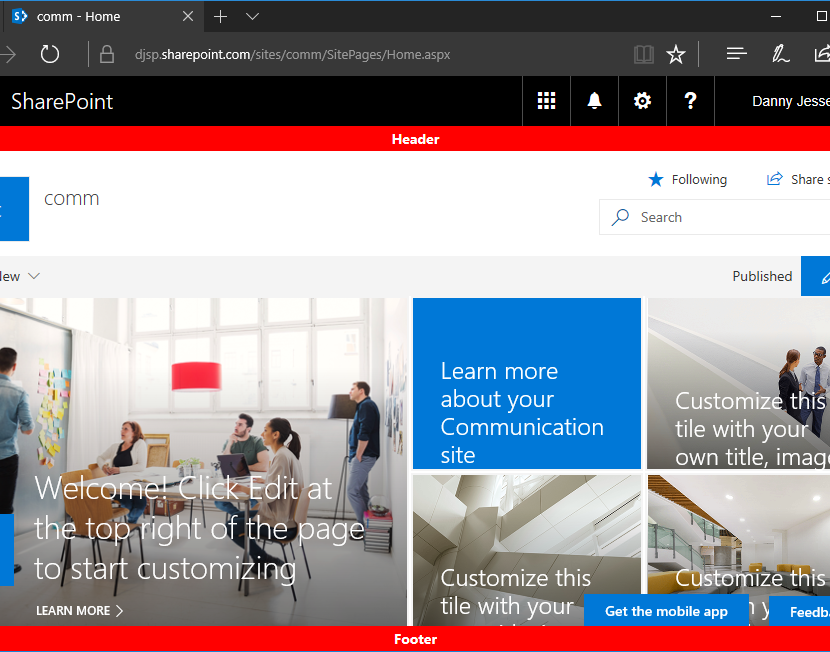

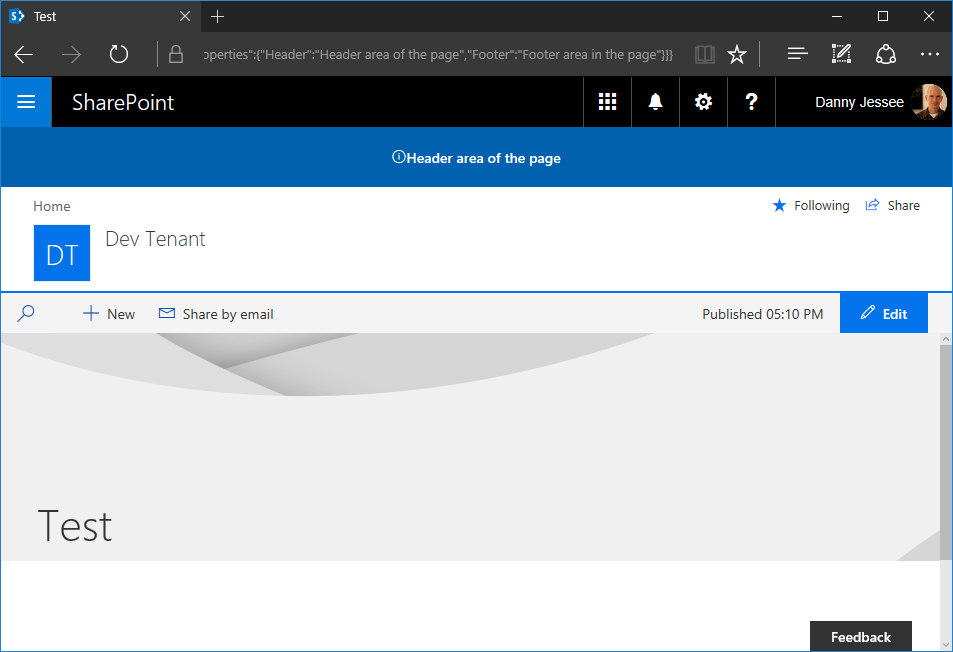

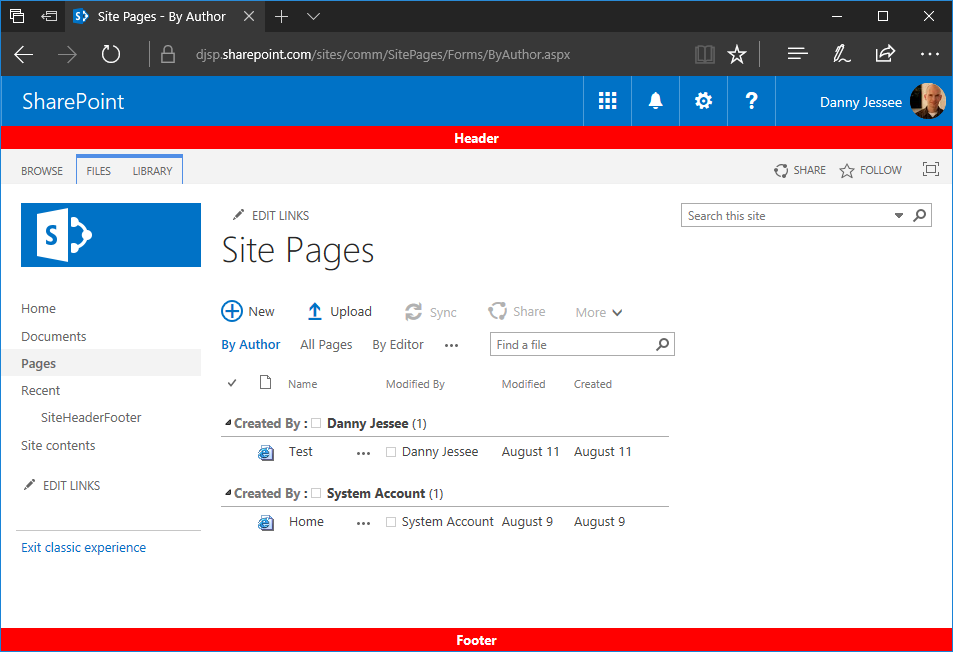

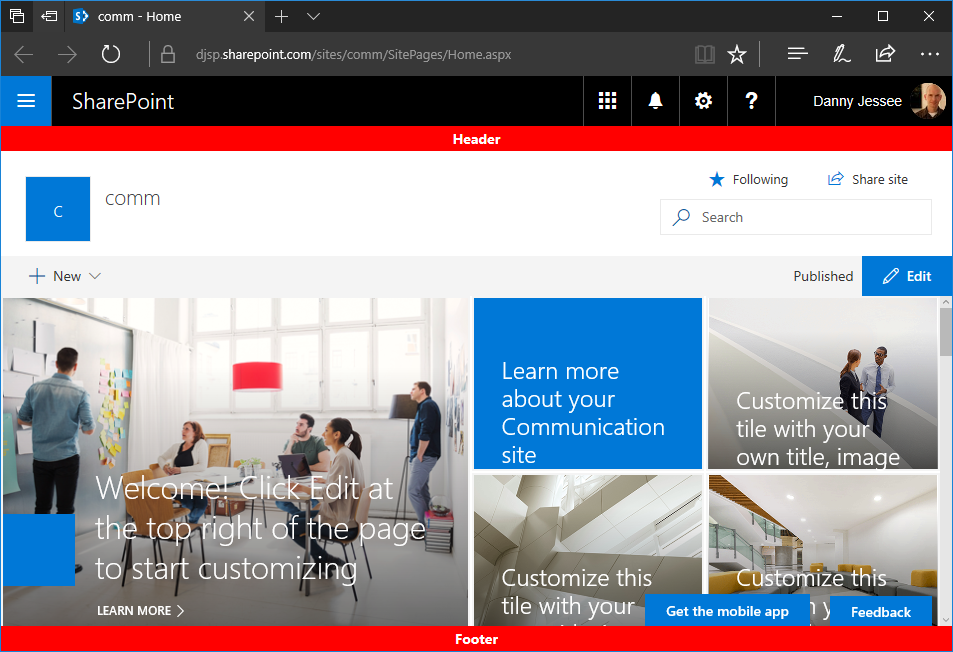

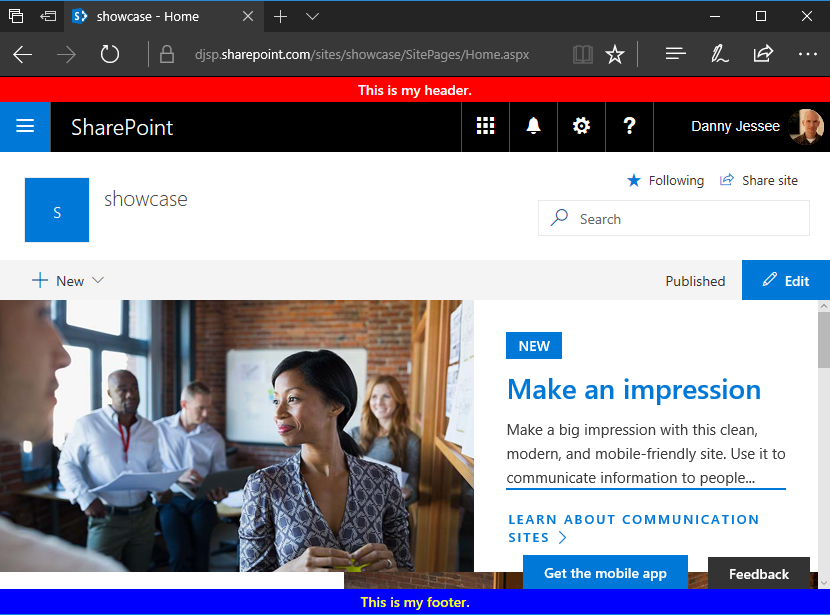

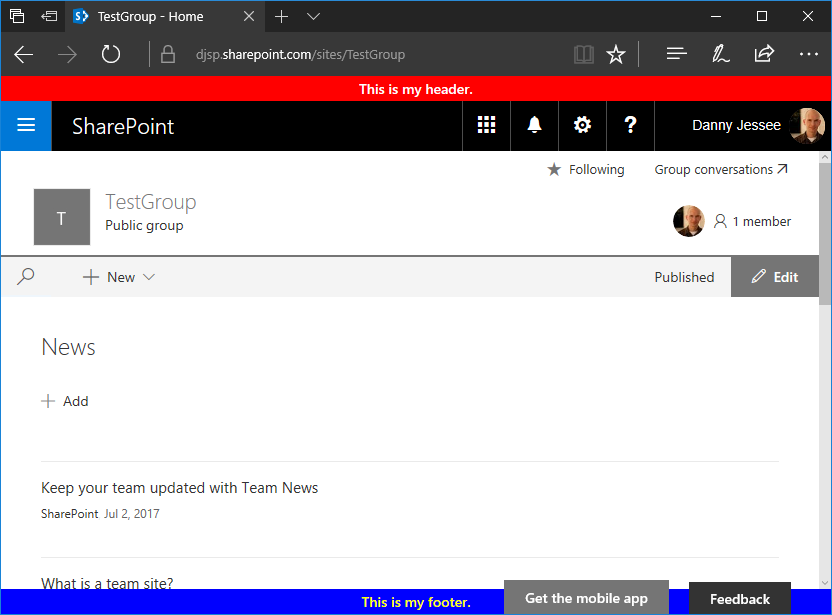

I performed the same steps with an application customizer extension that renders a simple TEST or PROD header in the “Top” placeholder on the page. I began by deploying the TEST version to my TEST site collection. After installing the app to the site, I saw the TEST header I expected to see:

After that, I created a new version of the solution and updated the extension to render PROD on a red background instead. I packaged and deployed this new version tenant-wide from my tenant app catalog and it appeared on this page outside my TEST site collection:

But when I went back to my TEST site collection, I saw something different:

It appears that in addition to the initial site collection-scoped deployment, the site also picks up the reference to the tenant deployed extension from the Tenant Wide Extensions list in the tenant’s app catalog site. Because the site collection app catalog takes precedence, that second reference resolves to the site collection version as well, so the TEST header is rendered a second time.

And similar to the behavior I saw after removing the installed app instance associated with my TEST web part from that site collection, uninstalling the app instance associated with my TEST extension (but leaving the .sppkg file in the site collection app catalog) caused no extensions to be rendered on the page — neither the site collection-scoped version nor the tenant deployed version:

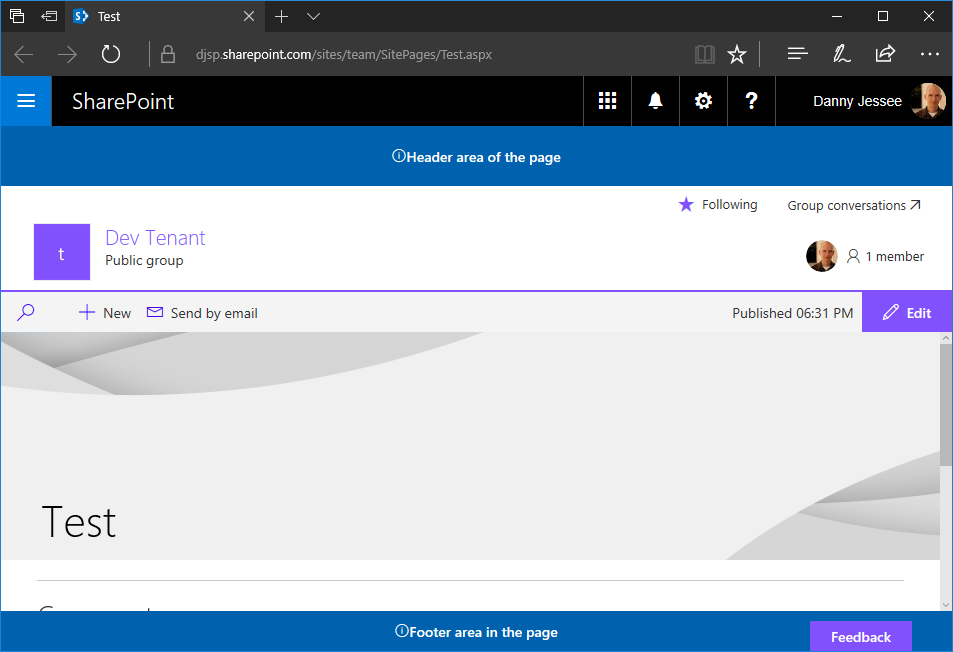

Once again, I had to delete my TEST extension solution’s .sppkg file from the site collection app catalog. After I did that, I returned to the home page of the site and saw the PROD extension was now being rendered:
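The extension results differ from the web part results in one respect: the tenant-wide registration adds a second rendering of the site collection build. A rough model of what I saw is sketched below. The function and flag names are hypothetical, and the returned labels simply stand for the TEST and PROD headers from my experiment.

```javascript
// Hypothetical model of the observed behavior; not a SharePoint API.
// Returns the headers rendered in the "Top" placeholder of a page in the site.
function renderedHeaders({ packageInSiteCatalog, appInstalledInSite, deployedTenantWide }) {
  if (packageInSiteCatalog) {
    // With the app uninstalled, neither version rendered in my tests.
    if (!appInstalledInSite) return [];
    // The tenant-wide registration resolved to the site collection build,
    // so the TEST header appeared a second time.
    return deployedTenantWide ? ['TEST', 'TEST'] : ['TEST'];
  }
  // Package removed from the site collection catalog: tenant build renders.
  return deployedTenantWide ? ['PROD'] : [];
}

console.log(renderedHeaders({
  packageInSiteCatalog: true,
  appInstalledInSite: true,
  deployedTenantWide: true
}));
```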

Final thoughts

Once you learn the nuances of how SharePoint deconflicts site collection-scoped and tenant-wide deployments for SharePoint Framework solution packages, you can determine which approach works best for you when managing the two types of deployments concurrently for a solution package within a single tenant. If possible, you might consider configuring tasks in your CI/CD pipeline to uninstall and remove any solution packages from site collection app catalogs before they are deployed tenant-wide. You can learn more about CI/CD pipelines for enterprise SharePoint Framework development in my Pluralsight course “Scaling up SharePoint Framework Development for Enterprises.”
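As a sketch of the ordering such a pipeline would need, the helper below builds a plan in which every site collection app catalog is cleaned up before the tenant-wide deployment runs. It only produces a list of steps and does not call SharePoint. The function name, the action labels, and the example URLs are assumptions for illustration, and each step would still have to be mapped to whatever tooling your pipeline uses.

```javascript
// Hypothetical helper: builds an ordered plan only, it does not call SharePoint.
function planTenantWideRollout(packageName, siteCatalogUrls) {
  const steps = [];
  for (const siteUrl of siteCatalogUrls) {
    // Uninstall the app first, then remove the .sppkg from that site's catalog.
    steps.push({ action: 'uninstall-app', siteUrl, packageName });
    steps.push({ action: 'remove-package', siteUrl, packageName });
  }
  // Only deploy tenant-wide once no site collection catalog shadows the package.
  steps.push({ action: 'deploy-tenant-wide', packageName });
  return steps;
}

const plan = planTenantWideRollout('scope-test.sppkg', [
  'https://contoso.sharepoint.com/sites/test',
  'https://contoso.sharepoint.com/sites/staging'
]);
plan.forEach((step, index) => console.log(index + 1, step.action, step.siteUrl || ''));
```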

Rendering a single SharePoint page in IE using Edge mode

Update 3/30/2020: I added one additional check when redirecting out of the child iframe to ensure the parent iframe is ie.aspx. This prevents issues when launching other pages that also load child iframes, such as the file upload dialog (Upload.aspx) in a document library.

I recently ran into an issue supporting an old SharePoint 2013 farm where a user had embedded a third-party GIS tool’s dashboard on a web part page using a Page Viewer web part. Unfortunately, the dashboard would not load properly in an iframe when IE’s document mode was set to version 10 (as it is by default in seattle.master):

Fortunately, the dashboard would load just fine when IE’s document mode was set to Edge instead:

Problem solved, right? Not so fast…turns out making this change site-wide via the master page breaks important functionality like Open with Explorer!

I needed to find a way to set IE’s document mode to Edge, but just on that one page. Thankfully, Paul Tavares developed this outstanding workaround, which also works great when you don’t have access to make changes to the master page. His post explains in depth how this works, but in short it loads the offending page in a full-screen iframe from a parent page (ie.aspx) that sets the document mode to IE=Edge. Since IE obeys the first X-UA-Compatible meta tag it encounters, it will render the SharePoint page in IE=Edge document mode, even though the master page is still set to IE=10.

I added a Script Editor web part to the dashboard page to detect IE and redirect the user to the special ie.aspx page accordingly:

// Detect Internet Explorer and redirect to ie.aspx
if (navigator.userAgent.indexOf('MSIE') !== -1 || navigator.appVersion.indexOf('Trident/') > -1) {
    window.location.href = 'ie.aspx?/path/to/dashboardPage.aspx';
}
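To try the detection outside a browser, the same test can be wrapped in a function that takes the user agent string as a parameter. This is a minimal sketch: `isInternetExplorer` and `buildIeRedirect` are names I made up for illustration, and it looks for `Trident/` in the user agent string rather than in `navigator.appVersion`.

```javascript
// Same idea as the Script Editor snippet, but against a string passed in.
function isInternetExplorer(userAgent) {
  // IE 10 and earlier include "MSIE"; IE 11 dropped it but still has "Trident/".
  return userAgent.indexOf('MSIE') !== -1 || userAgent.indexOf('Trident/') !== -1;
}

// Builds the ie.aspx URL, passing the page to frame in the query string.
function buildIeRedirect(pagePath) {
  return 'ie.aspx?' + pagePath;
}

const ie11 = 'Mozilla/5.0 (Windows NT 10.0; WOW64; Trident/7.0; rv:11.0) like Gecko';
if (isInternetExplorer(ie11)) {
  console.log(buildIeRedirect('/path/to/dashboardPage.aspx'));
}
```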

So this fixed the issue of the dashboard not loading for IE users, but a new problem emerged. As users would navigate around from the dashboard page, all navigation would occur within the child iframe. This meant that:

- The IE address bar did not update as navigation occurred.

- Open with Explorer was broken again since the browser was now rendering all pages in IE=Edge mode.

In order to address this, I had to add some JavaScript to the master page to detect if the page is being loaded in an iframe. If it is, and it isn’t the page I need to load in an iframe, I redirect out of the iframe:

// Detect Internet Explorer
if (navigator.userAgent.indexOf('MSIE') !== -1 || navigator.appVersion.indexOf('Trident/') > -1) {
    // If the page is framed by ie.aspx and is not the dashboard page, redirect out of the iframe
    if (window.location.href !== window.parent.location.href &&
        window.parent.location.href.indexOf('/ie.aspx') > -1 &&
        window.location.href.indexOf('dashboardPage.aspx') === -1) {
        window.parent.location.href = window.location.href;
    }
}
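The three conditions are easier to reason about when pulled out into a pure function. The sketch below is one way to express them, following the update note at the top of this post in that it is the parent frame that is tested for ie.aspx. The function name and the example URLs are hypothetical.

```javascript
// Hypothetical pure version of the master page check.
// childHref: URL of the page being loaded; parentHref: URL of its parent window;
// framedPage: the one page that is supposed to stay inside the ie.aspx iframe.
function shouldBreakOutOfFrame(childHref, parentHref, framedPage) {
  const isFramed = childHref !== parentHref;
  const parentIsIeWrapper = parentHref.indexOf('/ie.aspx') > -1;
  const isFramedPage = childHref.indexOf(framedPage) > -1;
  return isFramed && parentIsIeWrapper && !isFramedPage;
}

const wrapper = 'https://sp.contoso.local/sites/gis/SitePages/ie.aspx?/sites/gis/SitePages/dashboardPage.aspx';
console.log(shouldBreakOutOfFrame('https://sp.contoso.local/sites/gis/SitePages/Home.aspx', wrapper, 'dashboardPage.aspx'));
```

Checking the parent for ie.aspx is what keeps dialogs such as Upload.aspx, which are framed by ordinary pages, from being redirected.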

I’m not thrilled with this solution, but it gets the job done. I would welcome any feedback or suggestions for improvement for people still stuck supporting old versions of SharePoint!

Deploy SharePoint Framework client-side web parts to SharePoint 2016 on-premises

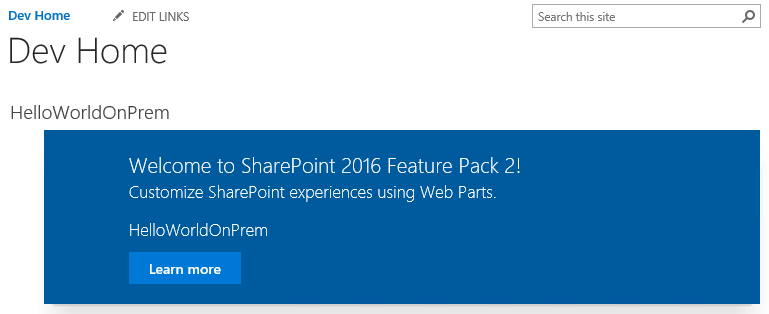

Earlier this month, Microsoft released Feature Pack 2 for SharePoint 2016. With this release came the long-awaited introduction of some SharePoint Framework capabilities on-premises, beginning with client-side web parts on classic pages (of course, there are no modern page experiences on-premises…yet).

So what do you need to do to start using client-side web parts on-premises? Follow the steps below!

Download and apply the September 2017 Public Update for SharePoint 2016

Of course it all starts with downloading and applying Feature Pack 2 (also known as the September 2017 Public Update for SharePoint 2016), which can be downloaded here. Once you’ve applied the Feature Pack, verify your configuration database version is 16.0.4588 in Central Administration:

Farm information shown on the Central Administration “Servers in Farm” screen.
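If you check this across several farms, comparing build numbers segment by segment avoids the pitfalls of comparing them as plain strings. A minimal sketch follows; `isAtLeastBuild` is a hypothetical helper, and the four-part version strings passed to it are just illustrative inputs.

```javascript
// Compares dotted version strings numerically, one segment at a time.
// Missing segments are treated as 0, so "16.0.4588" equals "16.0.4588.0".
function isAtLeastBuild(actual, required) {
  const a = actual.split('.').map(Number);
  const r = required.split('.').map(Number);
  const length = Math.max(a.length, r.length);
  for (let i = 0; i < length; i++) {
    const left = a[i] || 0;
    const right = r[i] || 0;
    if (left !== right) return left > right;
  }
  return true;
}

console.log(isAtLeastBuild('16.0.4588.1000', '16.0.4588'));
```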

Verify the farm configuration for apps/add-ins

If you are already using SharePoint add-ins and have completed the necessary steps to configure your on-premises environment for apps, you can skip this section. Otherwise, there is some infrastructure work that needs to be done before you can leverage the app/add-in model. Since SharePoint Framework packages must be uploaded to an app catalog, your on-premises farm must be configured for apps in order to use the SharePoint Framework. This is true even if you do not intend to leverage the add-in model (although you should definitely consider it, as SharePoint Framework capabilities are complementary to–and not a wholesale replacement for–the add-in model).

At a high level, the steps you must perform include:

1. Creating the forward lookup zone and CNAME alias in DNS.

2. Configuring the Subscription Settings and App Management service applications.

3. Setting up app URLs in Central Administration.

4. Granting access to the app catalog to user(s) who may install your client-side web parts to a site (this is an important one and is definitely the first place to look if users trying to add them to a site don’t see them on the Add an app screen).

These steps are outlined in further detail here.

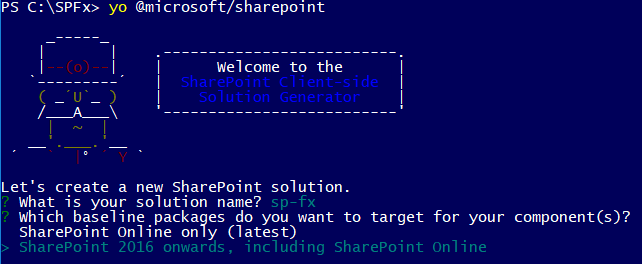

Update the Yeoman generator

Assuming you have already configured your SharePoint Framework development environment, ensure your Yeoman generator has been updated to version 1.3.0 or later. You can also develop client-side web parts for SharePoint 2016 on-premises using version 1.0.2 of the generator, but I would highly encourage you to update to the latest version of the generator using this command:

npm i -g @microsoft/generator-sharepoint

Beginning with v1.3.0 of the generator, you are given the option to choose whether your solution needs to support SharePoint 2016 on-premises and/or SharePoint Online:

Version 1.3.0 of the Yeoman generator now prompts you to choose between targeting SharePoint on-premises and/or SharePoint Online.

This is important because SharePoint Online will always have support for newer SharePoint Framework capabilities than what will be available on-premises and will therefore target different dependencies within its baseline packages.

Build, package, and deploy the client-side web part

This process is largely the same as developing client-side web parts for SharePoint Online. There is even a live online workbench for local testing available within your on-premises sites at _layouts/15/workbench.aspx (in addition to the local workbench that is always available when you run gulp serve).

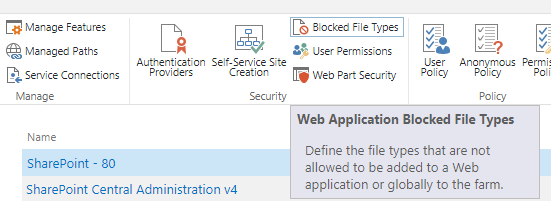

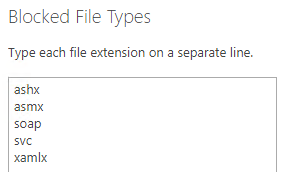

Before you build and package your solution, remember to update the cdnBasePath value in config/write-manifests.json to match the location where you intend to deploy your client-side web part’s assets. The files you will need to deploy are one .json and two .js files. NOTE: If you intend to deploy these assets to a SharePoint document library, you may need to remove .json files from the list of blocked file types. This may be done in Central Administration under Manage web applications by selecting Blocked File Types in the ribbon and removing json from the list:

Ensure that json does NOT appear in the list of blocked file type extensions if you intend to deploy your solution’s assets to SharePoint.
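Setting the cdnBasePath value amounts to changing a single property in config/write-manifests.json. As a sketch, the function below takes the parsed contents of that file and returns a copy with the new value. The function name and the document library URL are assumptions for illustration; substitute the location where you actually host the assets.

```javascript
// Returns a copy of the parsed write-manifests.json with cdnBasePath replaced.
// Any other properties in the file are carried over unchanged.
function withCdnBasePath(writeManifests, assetsUrl) {
  return Object.assign({}, writeManifests, { cdnBasePath: assetsUrl });
}

// Hypothetical asset location in an on-premises document library.
const updated = withCdnBasePath(
  { cdnBasePath: '<!-- PATH TO CDN -->' },
  'https://sharepoint.contoso.local/sites/apps/SPFxAssets'
);
console.log(JSON.stringify(updated, null, 2));
```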

After you have deployed your solution’s assets, upload the .sppkg file from the sharepoint/solution directory to your app catalog. This will then make the solution available to install on sites where you wish to add the client-side web part.

Add the client-side web part to a site

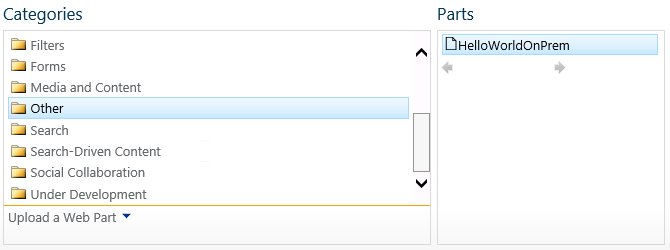

From the Add an app screen, select your solution. You will then be able to add the web part to a page by selecting Web Part from the Insert tab of the ribbon on a wiki or web part page. By default, your client-side web part will appear in the Other category:

SharePoint Framework client-side web parts can be added to the page just like any classic web part.

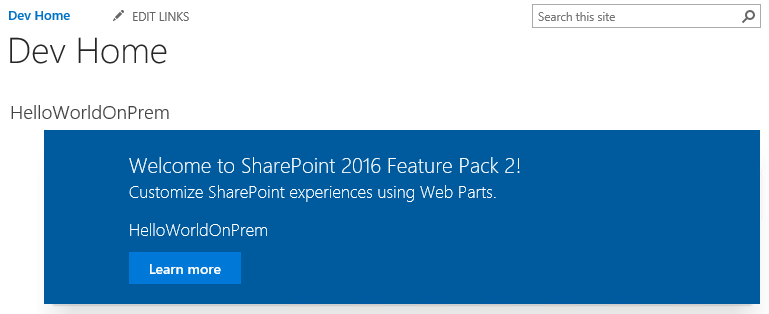

The web part will then appear on the page.

SharePoint Framework client-side web part added to a SharePoint 2016 on-premises wiki page.

Other notes

– Remember that you cannot add an app to a site when logged in as the System Account. Ensure you are signed in as a different user when adding the app to your site.

– The web part file’s properties, including its group, title, and description, can be changed by updating the [web part name].manifest.json file in the src/webparts/[web part name] directory.

– There will always be some capability gap between SharePoint Online and on-premises. The SharePoint team has committed to merging new SharePoint Framework capabilities into future on-premises releases, but don’t expect to see support for things like SharePoint Framework Extensions on-premises anytime soon.

– There is no on-premises support for tenant/farm-scoped deployment of client-side web parts. You must upload the .sppkg file to your on-premises app catalog(s) and manually add the app on each site where you need to leverage your client-side web part.

– If you have completed all of the appropriate farm configuration to support the add-in model but users don’t see your SharePoint Framework solutions from the Add an app screen, ensure you have explicitly granted those users access to your app catalog.

It is so exciting to have the first set of SharePoint Framework capabilities now available to on-premises users. Happy coding!

Custom modern page header and footer using SharePoint Framework, part 3

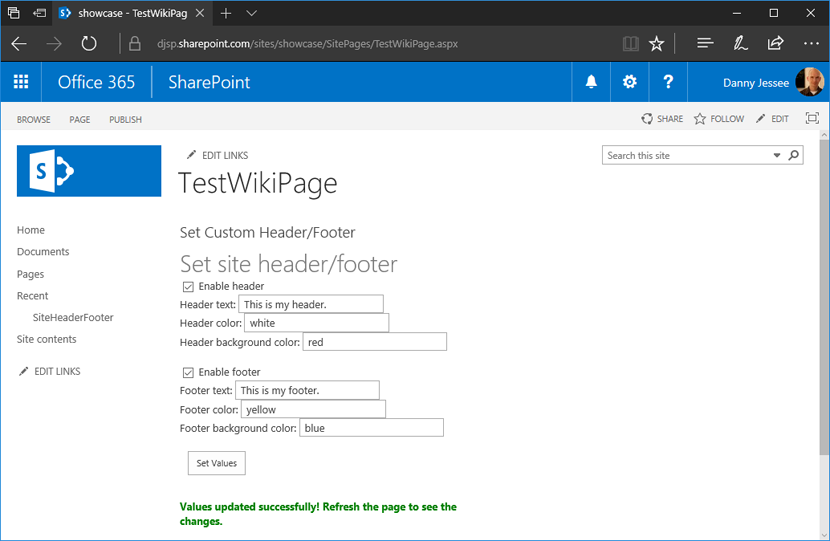

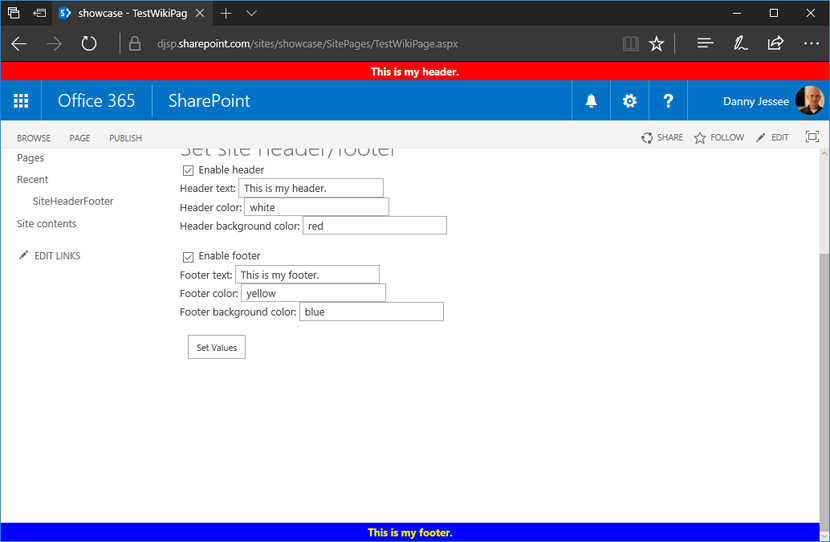

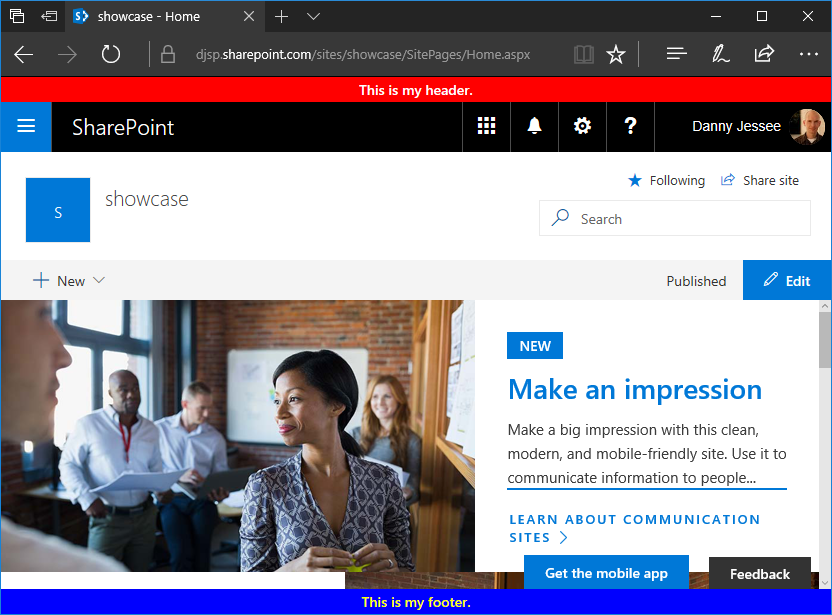

This post is part 3 of what is currently a 3 part series of updates to my SharePoint Framework application customizer as the capability has evolved since entering developer preview. I have updated this code a number of times since publishing part 1 back in June. You can follow the history of these updates by reading my earlier posts:

In this post, I will update my custom header and footer SharePoint Framework extension based on the changes required for RC0 (SPFx 1.2.0) as well as to support the new tenant-scoped deployment option for SharePoint Framework solutions.

Release Candidate now available

Changes are coming fast in the SharePoint Framework world! Last week, Microsoft announced the Release Candidate (RC0) for SharePoint Framework Extensions with SPFx v1.2.0. A few days prior, I noticed some page placeholder names had changed and my extension’s onRender() method was no longer being called:

Looks like big changes are happening with #SPFx page placeholders this morning! I'm seeing new names (Top, Bottom) on pages in modern sites.

— Danny Jessee (@dannyjessee) August 24, 2017

I noticed my header/footer extension stopped working this morning and saw this in the console. pic.twitter.com/Lfgjgd9eiA

— Danny Jessee (@dannyjessee) August 24, 2017

Still seeing PageHeader and PageFooter on modern page experiences in classic sites but my extension isn't working on them now, either. #SPFx https://t.co/nS4IsrIOt2

— Danny Jessee (@dannyjessee) August 24, 2017

Sure enough, my suspicions were confirmed in this Github issue and just a few short days later we had a new release candidate!

You can read the details about all of the changes in the RC release notes. Here’s a quick summary of what I needed to do to update my extension project:

1. Update package.json to reflect the updated versions of SPFx dependencies and devDependencies. Mikael Svenson has a great write-up of this process in this blog post. I essentially had to change any dependency on 1.1.1 to 1.2.0 instead.

2. Run gulp --upgrade (dash dash upgrade) to update my project’s config.json file.

3. Update my HeaderFooterApplicationCustomizer.ts to do the following:

– Leverage the updated placeholderProvider.

– Use updated placeholder names Top and Bottom as well as new types PlaceholderName and PlaceholderContent.

– Explicitly call onRender() from my onInit() method.

You can examine all the differences in my code changes here.
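The shape of the updated rendering logic is roughly as follows. This is a simplified sketch rather than the code from my repository: it takes the placeholder provider as a parameter so it can run without the SharePoint Framework packages, the `PlaceholderName` object is a stand-in for the real type from @microsoft/sp-application-base, and the fake provider exists only to demonstrate a page without a Bottom placeholder.

```javascript
// Stand-in for the PlaceholderName type; in a real project it is imported
// from @microsoft/sp-application-base rather than declared by hand.
const PlaceholderName = { Top: 'Top', Bottom: 'Bottom' };

// Renders header and footer markup through a placeholder provider.
// `provider` is expected to expose tryCreateContent(name), returning an object
// with a domElement, or undefined when the page lacks that placeholder.
function renderHeaderFooter(provider, headerHtml, footerHtml) {
  const rendered = [];
  const targets = [
    [PlaceholderName.Top, headerHtml],
    [PlaceholderName.Bottom, footerHtml]
  ];
  for (const [name, html] of targets) {
    const content = provider.tryCreateContent(name);
    if (content) {
      content.domElement.innerHTML = html;
      rendered.push(name);
    }
  }
  return rendered;
}

// Fake provider simulating a page that only offers the Top placeholder.
const fakeProvider = {
  tryCreateContent: (name) => (name === 'Top' ? { domElement: {} } : undefined)
};
console.log(renderHeaderFooter(fakeProvider, '<div>Header</div>', '<div>Footer</div>'));
```

In the extension itself, a function like this would be called from onRender(), which in turn has to be invoked explicitly from onInit().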

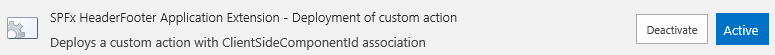

Update for tenant-scoped deployment

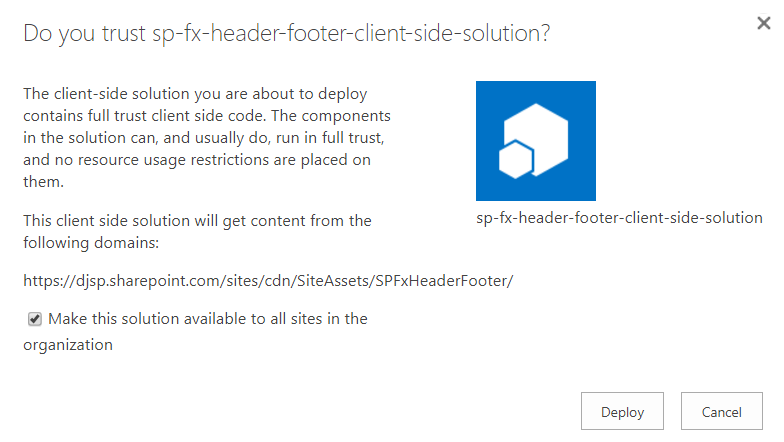

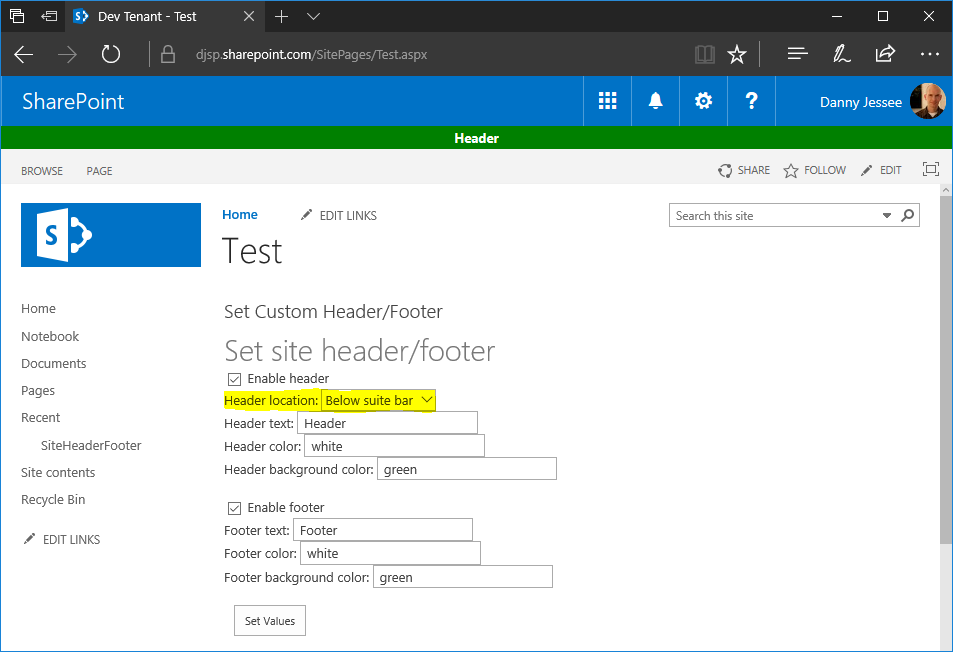

Even before this week’s RC0 announcement, I knew I already had to do some work to update my extension. While I was attending SharePoint Fest Seattle last month, Microsoft announced the ability to perform tenant-scoped deployments for SharePoint Framework parts and extensions. This is a great update that eliminates the need to manually install the “app” associated with my extension on every site. Instead, when deploying the package to my tenant’s app catalog, I can check a box to automatically make the solution available to all sites within my tenant (or “organization”):

To add support for tenant-scoped deployment, I added the following line to my package-solution.json file:

"skipFeatureDeployment": true

This marks the solution as being eligible for tenant-scoped deployment. Note that I still left the feature definition present in this file. This enables the solution to still be installed on individual sites via Site Contents > Add an app should the tenant administrator decline to perform a tenant-scoped installation.
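For context, the property belongs inside the solution object of package-solution.json. The sketch below applies the change to a parsed copy of the file and leaves the feature definition in place. The function name is hypothetical and the placeholder values in the example object are illustrative, not the contents of my actual file.

```javascript
// Returns a copy of the parsed package-solution.json with the solution marked
// as eligible for tenant-scoped deployment. Features are left untouched.
function enableTenantScopedDeployment(packageSolution) {
  const solution = Object.assign({}, packageSolution.solution, {
    skipFeatureDeployment: true
  });
  return Object.assign({}, packageSolution, { solution });
}

// Illustrative input; the id and feature entry are placeholders.
const result = enableTenantScopedDeployment({
  solution: {
    name: 'sp-fx-header-footer-client-side-solution',
    id: '<solution id>',
    version: '1.1.4.0',
    features: [{ title: '<feature title>' }]
  },
  paths: { zippedPackage: 'solution/sp-fx-header-footer.sppkg' }
});
console.log(JSON.stringify(result, null, 2));
```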

Adding the user custom action for the extension in a tenant-scoped deployment

If you check the box labeled Make this solution available to all sites in the organization before pressing Deploy, you will need to manually add the user custom action associated with the extension on any site where you would like the custom header and footer to be rendered on modern pages. If you deploy the extension at tenant scope, it is immediately available to all sites and you do not need to explicitly add the app from the Site Contents screen. However, because tenant-scoped extensions cannot leverage the feature framework, you will need to associate the user custom action with the ClientSideComponentId of the extension manually. This can be accomplished a number of different ways. Some example code using the .NET Managed Client Object Model in a console application is shown below:

// Requires: using System; using System.Security; using Microsoft.SharePoint.Client;
using (ClientContext ctx = new ClientContext("https://[YOUR TENANT].sharepoint.com"))
{
    // Build the SecureString password expected by SharePointOnlineCredentials
    SecureString password = new SecureString();
    foreach (char c in "[YOUR PASSWORD]".ToCharArray()) password.AppendChar(c);
    ctx.Credentials = new SharePointOnlineCredentials("[USER]@[YOUR TENANT].onmicrosoft.com", password);

    Web web = ctx.Web;
    UserCustomActionCollection ucaCollection = web.UserCustomActions;
    UserCustomAction uca = ucaCollection.Add();
    uca.Title = "SPFxHeaderFooterApplicationCustomizer";
    // This is the user custom action location for application customizer extensions
    uca.Location = "ClientSideExtension.ApplicationCustomizer";
    // Use the ID from HeaderFooterApplicationCustomizer.manifest.json below
    uca.ClientSideComponentId = new Guid("bbe5f3fa-7326-455d-8573-9f0b2b015ff9");
    uca.Update();

    ctx.Load(web, w => w.UserCustomActions);
    ctx.ExecuteQuery();

    Console.WriteLine("User custom action added to site successfully!");
}
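Another of those ways is the SharePoint REST API. The sketch below only builds the request body with the same three values set in the console application above. It assumes the body would be sent as a POST to the site's _api/web/UserCustomActions endpoint using verbose OData JSON, which is why the `__metadata` type is included. The function name is made up, and the request itself (authentication, request digest) is deliberately left out.

```javascript
// Builds the JSON body for registering the extension's user custom action.
// Assumption: this is POSTed to <site url>/_api/web/UserCustomActions with
// verbose OData headers; sending the request is not shown here.
function buildCustomActionPayload(title, componentId) {
  return {
    __metadata: { type: 'SP.UserCustomAction' },
    Title: title,
    // Location used for application customizer extensions
    Location: 'ClientSideExtension.ApplicationCustomizer',
    // ID from HeaderFooterApplicationCustomizer.manifest.json
    ClientSideComponentId: componentId
  };
}

const payload = buildCustomActionPayload(
  'SPFxHeaderFooterApplicationCustomizer',
  'bbe5f3fa-7326-455d-8573-9f0b2b015ff9'
);
console.log(JSON.stringify(payload, null, 2));
```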

If you decline to check the box to allow tenant-scoped installation when you upload the .sppkg file to the app catalog, the extension will still be available to manually add to any site via Site Contents > Add an app. If you manually add the extension to a site in this way, the user custom action registration will be handled automatically via the feature framework (as it has in the past). No additional code is necessary to register the user custom action in that case.

Version 1.1.4.0 now available!

All of my updated code (RC0 updates and support for tenant-scoped deployment) can be found within my Github repository at:

Custom modern page header and footer using SharePoint Framework, part 2

This post is part 2 of what is currently a 3 part series of updates to my SharePoint Framework application customizer as the capability has evolved since entering developer preview in June. Please see the links to parts 1 and 3 below.

When I first released my custom modern page header and footer using SharePoint Framework in June, there was one significant shortcoming with the built-in page placeholders on modern page experiences that made them impractical to use for a site-wide header and footer solution: modern site pages did not contain a PageFooter placeholder.

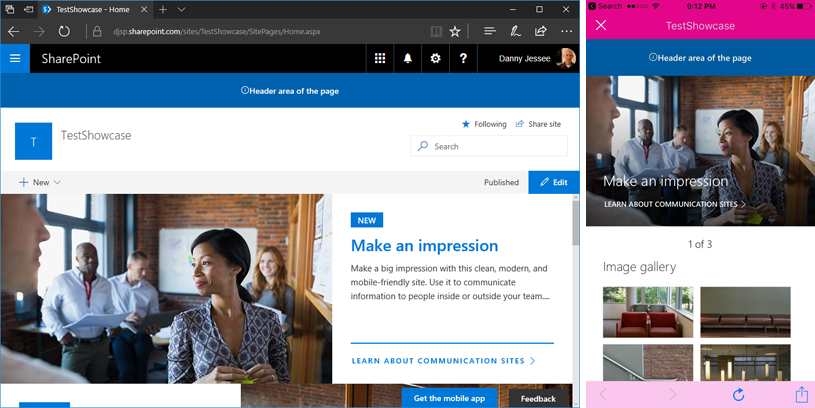

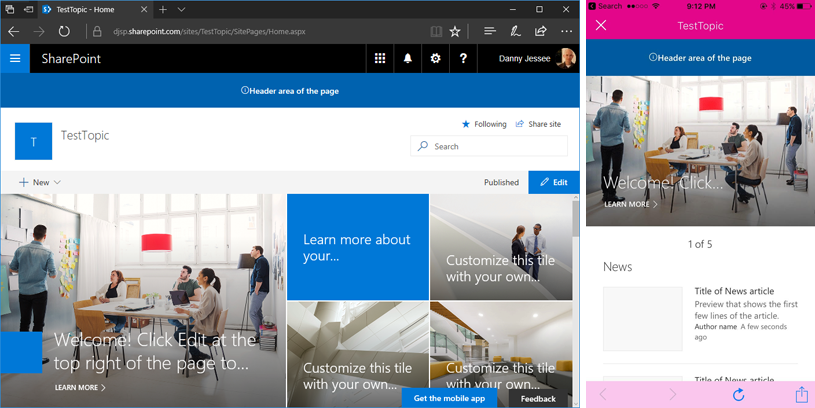

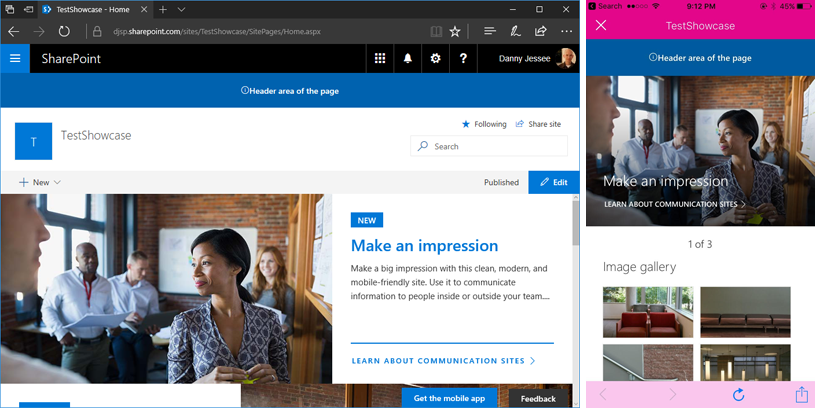

When application customizers were initially rolled out, modern site pages did not contain a PageFooter placeholder. Image from June 2017.

However, on or around August 11th, I noticed that the PageFooter placeholder started appearing on modern site pages. With this change, all modern page experiences now had PageHeader and PageFooter placeholders.

Modern site pages now contain a PageFooter placeholder! Image from August 2017.

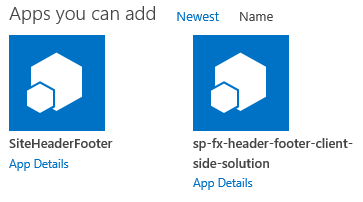

Update to the SharePoint Framework application customizer

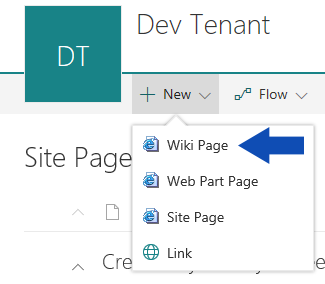

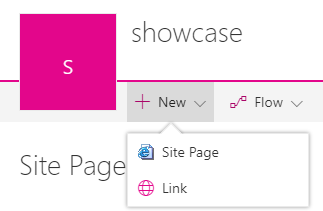

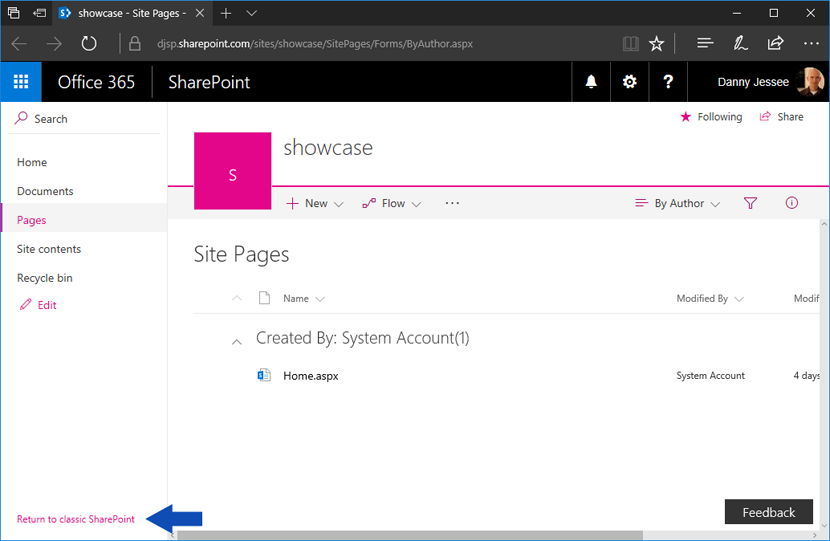

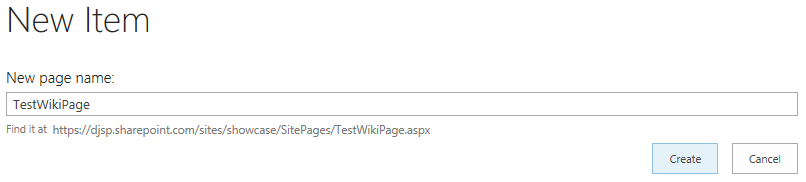

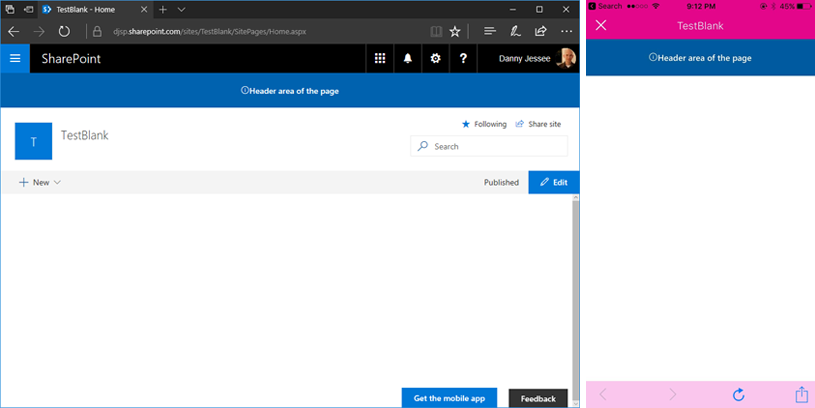

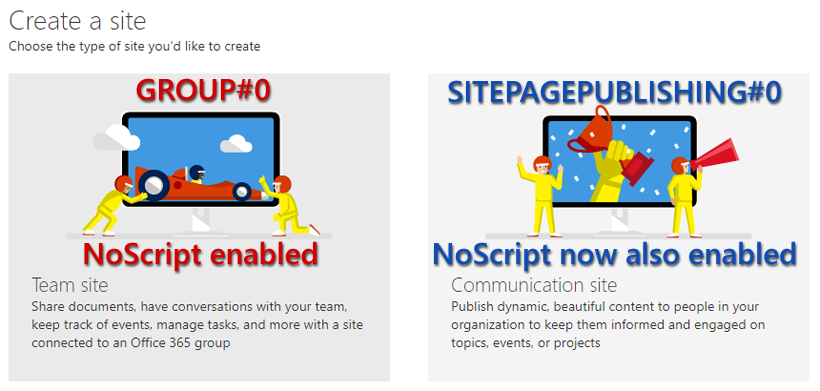

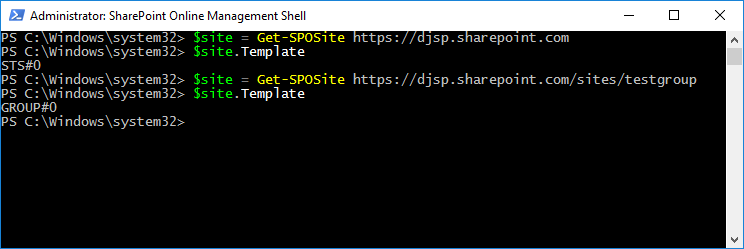

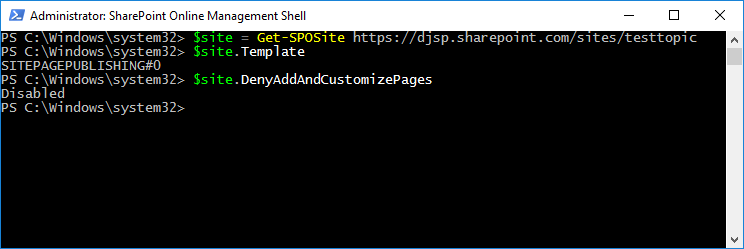

My original SPFx application customizer could not make use of the built-in page placeholders because at the time, modern site pages did not contain a PageFooter placeholder. Also, the PageHeader placeholder was located beneath the Office 365 suite bar instead of above it, which is where I had chosen to inject my custom header for classic pages using a SharePoint-hosted add-in. Because of this, I relied on fragile and unsupported DOM manipulation techniques that would break if Microsoft ever changed the class names or IDs of the main page content areas (modern site pages currently use a …).

I decided that having the page header beneath the suite bar was a worthwhile tradeoff to be able to leverage the built-in page placeholders and eliminate all of the ugly DOM manipulation I was previously doing. The updated code can be found in the HeaderFooterApplicationCustomizer.ts file contained within my Github repository at: https://github.com/dannyjessee/SPFxHeaderFooter

Update to the SharePoint-hosted add-in

Since I decided to leverage the built-in PageHeader placeholder on modern pages beneath the suite bar, for the sake of consistency I also updated my SharePoint-hosted add-in to provide the option to inject the custom header in the same location on classic pages:

My SharePoint-hosted add-in now offers the ability to select whether to render the header above or below the suite bar.

Code for the updated SharePoint-hosted add-in may be found on Github at the following location: https://github.com/dannyjessee/SiteHeaderFooter

Note that you must disable NoScript (if it is enabled) in order to install and utilize this add-in. For more information about NoScript and how to disable it, check out my post on NoScript and modern sites.
Putting it all together

With the SharePoint-hosted add-in and SharePoint Framework application customizer both installed on the same site, you can render a custom header and footer consistently across all classic and modern page experiences within that site.

Custom header and footer rendered on a classic page experience within a communication site using a SharePoint-hosted add-in.

Custom header and footer rendered on a modern page experience within a communication site using a SharePoint Framework application customizer and page placeholders.

In just a few short weeks (August 8-11), I will be joining SharePoint experts from around the world at SharePoint Fest Seattle 2017! I am very excited to be debuting a new session that will take a lighthearted, but detailed look at how to attempt to achieve consistency when branding the mix of classic and modern page experiences within SharePoint Online sites, modern team sites, and recently unveiled communication sites using the SharePoint Framework along with techniques from the SharePoint add-in model:

DEV 206 – Apply Consistent UI Customizations Across Classic and Modern Pages in SharePoint Online

SharePoint Online sites are currently made up of a hodgepodge of classic and modern page experiences. A site’s home page is a classic page while the site contents page is a modern page. Page experiences may vary between pages, lists, and libraries as users navigate a site. Although the SharePoint Framework allows you to build client-side web parts to customize both classic and modern pages, a combination of the add-in model and SharePoint Framework application customizers must be used to embed JavaScript-based UI customizations across both page types. In this demo-intensive session, you will learn how to brand a SharePoint Online site with a customizable page header and footer that looks identical across classic and modern pages.

If you register now and use code JesseeSeattle100 at checkout, you can save $100!
My session is Friday, August 11th at 3:30 in Breakout 8. This will be my fourth SharePoint Fest and my second SharePoint Fest Seattle. I look forward to seeing you there!

Although the title of this post indicates the particular objective I achieved, this post walks through the steps that are necessary for any scenario that requires you to have access to the classic page experience on a communication site or modern team site in SharePoint Online. In my case, the reason for this is to leverage a “legacy” app part on a classic wiki page that allows users to configure settings for a custom page header and footer. Unlike “classic” SharePoint Online sites, communication sites and modern team sites do not give you the ability to create a new wiki page in the Site Pages library that allows you to add “legacy” web parts. SharePoint Framework client-side web parts are the only custom web parts supported on modern page experiences. Microsoft didn’t necessarily make it “easy” to do all of this, but it is possible and the process is simple if you follow the steps below.

Step 1 (modern team sites only): Disable NoScript

This step is required because my app part uses custom script to access and manipulate values stored in the site property bag. In this post, I explain the differences between modern team sites and communication sites when it comes to the default NoScript setting and show how you can query a site’s NoScript status and change it if necessary. Because communication sites are not NoScript sites (apologies for the double negative), you do not need to perform this step on them.

Step 2: Install the SharePoint-hosted add-in

My SharePoint-hosted add-in surfaces an app part that reads configuration settings for the custom header and footer from the site property bag and allows users to update them. I built the .app package for the add-in and deployed it to my tenant’s app catalog site.
This made it available to install in my communication site and modern team site:

After installing my SharePoint-hosted add-in’s SiteHeaderFooter.app file and my SharePoint Framework Application Customizer Extension’s sp-fx-header-footer.sppkg file to my tenant’s app catalog site, both are available to install on my new communication site.

We will install the SharePoint Framework Application Customizer Extension in step 4 below. Notice how the addanapp.aspx page retains the “classic” page experience. This was my first clue that it would still be possible to unlock other classic page experiences–such as a classic wiki page–within these modern sites.

Step 3: Create the classic wiki page and add the “legacy” app part

If you navigate to the Site Pages library in a “classic” site, you will see the option to create a new Wiki Page within that library:

The Site Pages library within “classic” sites still allows you to create Wiki Pages with the classic page experience.

However, if you navigate to the Site Pages library in a communication site or modern team site, this option does not exist:

The Site Pages libraries within communication sites and modern team sites do not expose the option to create new Wiki Pages.

How can we add a new wiki page to the Site Pages library if the option isn’t there? By clicking the “Return to classic SharePoint” link that appears in the lower left corner of the screen:

There is much to be said about what happens when you click that link, but for our purposes it does exactly what we need (at least for the duration of our session). When viewing the same Site Pages library returned to the classic page experience, clicking the New link now prompts you for a new page name:

Specifying the page name and pressing Create gives us a new “classic” wiki page to which we can add the app part from the SharePoint-hosted add-in.
Once that is done, I can configure the custom header and footer parameters and press Set Values:

Refreshing the page will display the header and footer, since this is a classic page experience that supports the user custom actions used by the add-in to embed the JavaScript responsible for rendering the header and footer:

Installing and configuring the SharePoint-hosted add-in handles the rendering of the header and footer on all classic page experiences within a site. However, the site home page will still not display the header and footer because modern page experiences do not support user custom actions. That’s where the SharePoint Framework Application Customizer Extension comes into play.

Step 4: Install the SharePoint Framework Application Customizer Extension

Return to the addanapp.aspx page we visited earlier when installing the SharePoint-hosted add-in to our site. This time, choose to add the sp-fx-header-footer-client-side-solution. You can read all about this SharePoint Framework Application Customizer Extension and how it works in this blog post. This will activate the associated site-scoped feature that renders the header and footer on modern page experiences. You can confirm this by going to Site Settings and ensuring the SPFx HeaderFooter Application Extension – Deployment of custom action feature is Active:

When this feature is Active, the JavaScript associated with our SharePoint Framework Application Customizer Extension will execute on all modern page experiences.

Step 5: Enjoy the results

Below you can see my custom header and footer applied on both a new communication site and a modern team site:

Custom header and footer rendered on a communication site.

Custom header and footer rendered on a modern team site where I followed the same steps performed on the communication site in the steps above.
Key takeaways

– By returning to classic SharePoint, it is still possible to add new wiki pages to the Site Pages library in communication sites and modern team sites in SharePoint Online (where the option to create them is not shown by default).

The original post from July 1, 2017 appears below.

Earlier this month, Microsoft launched the developer preview of SharePoint Framework Extensions. I examined the available page placeholders on modern list and library pages, the modern Site Contents page, and modern site pages in this blog post. With the rollout of SharePoint communication sites now underway, I thought I would take this opportunity to confirm the available page placeholders on each of the three new communication site designs:

– Topic

Communication sites are based on the SITEPAGEPUBLISHING#0 template and feature a new responsive modern site page for the site home page. Based on my previous research, it came as no surprise that all three modern communication site page designs contain the PageHeader placeholder but not the PageFooter placeholder. Below are screenshots of all three communication site designs in Edge and the SharePoint mobile app, reflecting the custom content rendered in the PageHeader placeholder after deploying the SharePoint Framework Hello World Application Customizer Extension.

Topic

Showcase

Blank

(8/13/2017) NOTE: All three communication site designs, as well as all modern site pages in general, now also contain the PageFooter placeholder.

What if I also need a custom footer on these modern communication site pages?

I recently implemented a custom header and footer SharePoint Framework Application Customizer Extension that was based on a custom header and footer SharePoint-hosted add-in I developed back in 2015.
This particular extension works great on the modern page experiences within my “classic” SharePoint Online site because I am able to leverage the SharePoint-hosted add-in part (on a classic wiki page) that maintains the custom header and footer configuration parameters in the site property bag.

In my next post, I will show you how to create a classic wiki page in a modern communication site (unlike in classic sites, you can’t just go to New > Wiki Page in the Site Pages library) to leverage the SharePoint-hosted add-in part to configure the custom header and footer configuration parameters in the site property bag. My SharePoint Framework Application Customizer Extension will then read these values from the property bag and render the custom header and footer. As an added bonus, I will also show you the extra step necessary to accomplish this on a modern team site, a so-called “NoScript site” that does not allow custom script to access the site property bag by default.

See below for more history.

UPDATE 8/1/2017: Newly created communication sites are still NoScript sites by default; however, as of today I am again able to disable NoScript on them by running the Set-SPOSite cmdlet shown in the post below. See this GitHub issue for more information.

UPDATE 7/22/2017: It appears that newly created communication sites are also NoScript sites by default, and I am currently receiving an error when I try to disable NoScript on one:

“I'm currently getting an error when trying to disable NoScript on a new #SharePoint Online communication site in my dev tenant. pic.twitter.com/yC3Mxymc1w” (Danny Jessee, @dannyjessee, July 22, 2017)

“However, I can still disable NoScript on a new #SharePoint Online modern team site without any issues in my dev tenant. pic.twitter.com/iAMhg5SvDb” (Danny Jessee, @dannyjessee, July 22, 2017)

The original post from July 1, 2017 appears below.
While new SharePoint Online communication sites continue to roll out across First Release – Select Users tenants, I discovered an interesting difference between them and modern team sites. Modern team sites have been around since late 2016 and are connected to an Office 365 Group when they are created. They are also so-called “NoScript sites” by default, which affects the types of customizations that can be applied to them. They use the GROUP#0 template and not the STS#0 template used by “classic” team sites (which can still contain modern page experiences…confused yet?).

My “classic” team site uses the STS#0 template while my “modern” team site uses the GROUP#0 template.

Some of the original guidance suggested that NoScript could not be disabled on modern team sites, but earlier this week Microsoft decided to officially support disabling NoScript on modern team sites. The documentation on MSDN has been updated to reflect this as well.

Checking the NoScript status of a site

You can query a site’s DenyAddAndCustomizePages property via PowerShell:

If the value is Enabled, your site is a NoScript site. To disable it, run the following cmdlet:

Disabling NoScript allows users to run custom scripts on a site and allows custom script to do things such as access a site’s property bag. This may come in handy if you happen to have a solution that requires this. Stay tuned for more about that!

What about communication sites?

Since communication sites are new modern sites, you might expect them to be NoScript sites as well. However, this is not the case. Communication sites use the SITEPAGEPUBLISHING#0 template and come with the DenyAddAndCustomizePages property Disabled out of the box:

My communication site uses the SITEPAGEPUBLISHING#0 template and already has NoScript disabled.

In summary:

(8/13/2017) NOTE: Both modern team sites and communication sites now have NoScript enabled by default.
In either case, NoScript can be disabled by running the appropriate Set-SPOSite cmdlet detailed above. In an upcoming post, I will leverage this knowledge to apply my custom header and footer SharePoint Framework Application Customizer Extension to both modern team sites and communication sites.

In my last post, I showed how you must click Return to classic SharePoint in order to remove an app from SharePoint Online’s modern Site Contents page. Clicking Return to classic SharePoint sets a session cookie named splnu with a Content attribute value of 0:

As long as this cookie is present, pages that would typically render using the modern page experience (such as the Site Contents page) will use the classic page experience instead. But did you catch something about that cookie in the screenshot above? It is set with a Path attribute value of /:

Based on this explanation of the purpose of a cookie’s Domain and Path attribute values as defined in RFC 6265, the value of the Path attribute can be used to scope the cookie to a particular URL (and its subdirectories). However, when it is set to /, the browser will use the cookie for any path within the cookie’s domain.

From any site, it affects every site

It turns out that the splnu cookie’s Path attribute is set to / no matter which site or subsite you choose to return to the classic page experience. I tested this by clicking Return to classic SharePoint from each of the following sites within my tenant (clearing my cookies between each test):

– https://djsp.sharepoint.com (root site collection)

In all of these scenarios, the same splnu cookie was created with the same Path attribute of /, no matter the subdirectory from which the request was initiated.

What does this mean?
For the duration of your session (splnu is a session cookie), you will be returned to the classic SharePoint page experience on ALL sites that share the same parent domain. In my example above, the root site collection, subsite, and private site collection underneath the /sites path all share the same parent domain (djsp.sharepoint.com) and are therefore all matches for the Path of /.

This may not be a major issue for you since the splnu cookie is only valid for the duration of your session, and as I suggest in my last post, the effects of this cookie can be mitigated by using your browser’s “private browsing” or “incognito” mode. However, this behavior may cause confusion since you might expect the Return to classic SharePoint link to only apply within the scope of the particular site where you selected it.

To that end, I have submitted this UserVoice request asking the product team to consider appropriately scoping the Path attribute value of the splnu session cookie based on where the user has asked to return to the classic page experience. Feel free to vote or comment on my request.
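To make the Path behavior concrete, here is a minimal TypeScript sketch of the path-matching rule from RFC 6265 (section 5.1.4), applied to the three site paths tested in this post. The function name pathMatches and the scoped Path value of /sites/test are my own illustrative assumptions. SharePoint sets the Path to /, so the scoped case only shows what a narrower Path would do, not anything SharePoint does today.

```typescript
// Sketch of the RFC 6265 (section 5.1.4) path-match rule.
// pathMatches is a hypothetical helper name, not a browser or SharePoint API.
function pathMatches(cookiePath: string, requestPath: string): boolean {
  // Rule 1: the cookie path and the request path are identical.
  if (cookiePath === requestPath) return true;
  // Otherwise the cookie path must be a prefix of the request path.
  if (!requestPath.startsWith(cookiePath)) return false;
  // Rule 2: the cookie path ends in "/" (a Path of "/" always lands here).
  if (cookiePath.endsWith("/")) return true;
  // Rule 3: the first request-path character after the prefix is "/".
  return requestPath.charAt(cookiePath.length) === "/";
}

// URL paths of the three sites tested in this post.
const testedPaths: string[] = ["/", "/test", "/sites/test"];

// What SharePoint does today: Path=/ matches every site on the domain.
const withRootPath: boolean[] = testedPaths.map((p) => pathMatches("/", p));

// Hypothetical: a cookie scoped to /sites/test would match only that site.
const withScopedPath: boolean[] = testedPaths.map((p) =>
  pathMatches("/sites/test", p)
);

console.log(withRootPath, withScopedPath);
```

With a Path of /, all three sites match, which is the tenant-wide effect described above. With the hypothetical /sites/test scope, only the private site collection (and pages beneath it) would match.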

Speaking at SharePoint Fest Seattle 2017!

Applying a custom header and footer on communication sites and modern team sites

– Restoring this classic page experience to modern sites allows you to leverage “legacy” app parts that were built using the add-in model without having to rebuild the functionality using a SharePoint Framework client-side web part (NOTE: this is probably something you will still want to do in most cases).

– Even when your “Return to classic SharePoint” session cookie expires, your classic wiki page will remain in the Site Pages library and is still completely accessible.

– Applying branding such as a custom header and footer to modern sites–especially those that contain a combination of classic and modern page experiences–will require a multifaceted approach that may include capabilities from the SharePoint add-in model as well as SharePoint Framework.

SharePoint Framework page placeholders on new communication sites

UPDATE 8/13/2017: As of August 11, 2017, all modern site pages also contain the PageFooter placeholder.

– Showcase

– Blank

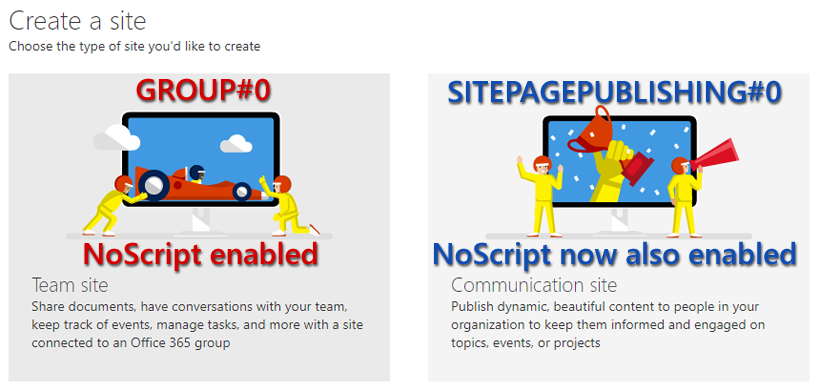

SharePoint Online modern team sites and communication sites are NoScript sites

UPDATE 8/13/2017: As it now appears that communication sites will be NoScript sites by default, I have updated the title of this post and the image at the bottom of the original post to reflect this change and avoid any confusion.

$site = Get-SPOSite https://yoursite.sharepoint.com

$site.DenyAddAndCustomizePages

Set-SPOSite https://yoursite.sharepoint.com -DenyAddAndCustomizePages 0

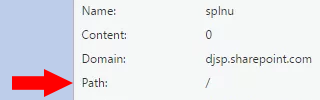

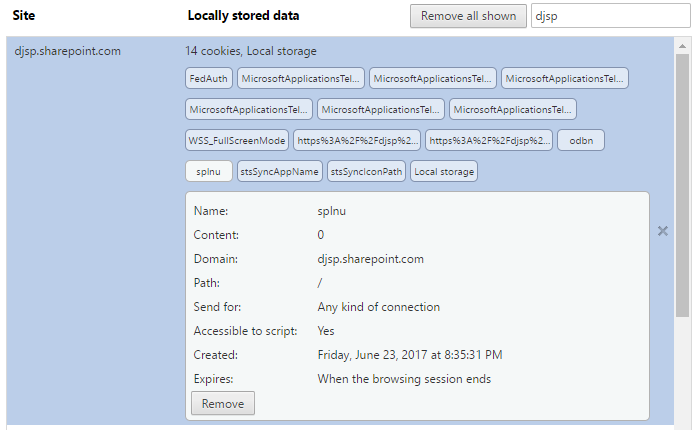

The “opt out of modern experiences” (splnu) cookie applies to all sites in your tenant subdomain

– https://djsp.sharepoint.com/test (subsite of root site collection)

– https://djsp.sharepoint.com/sites/test (root of new private site collection)